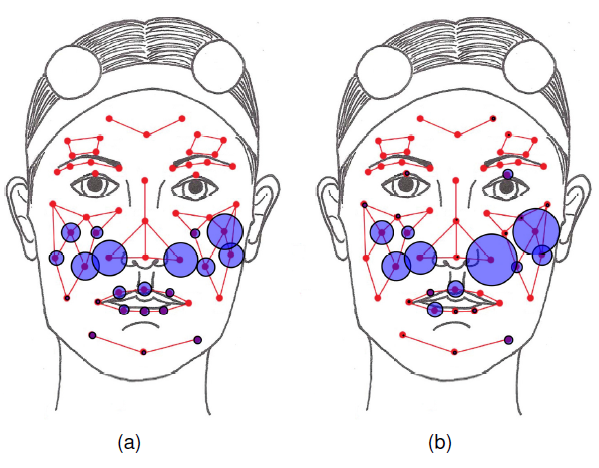

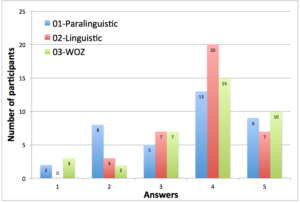

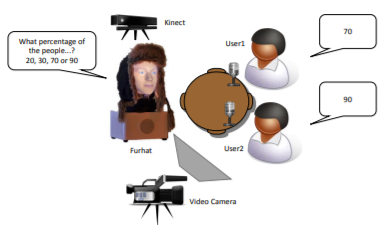

Multimodal Data Collection of Human-Robot Humorous Interactions in the JOKER Project

This paper presents a data collection of humorous social-interaction dialogs between a human participant and a robot. The interaction scenarios were designed to study social markers such as laughter.

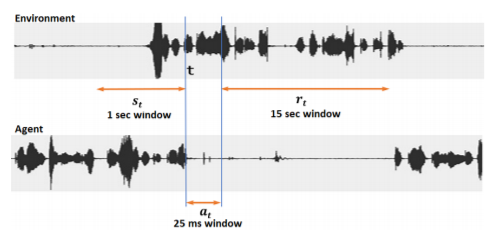

Speech Driven Backchannel Generation Using Deep Q-Network for Enhancing Engagement in Human-Robot Interaction

We present a novel method for training a social robot to generate backchannels during human-robot interaction. We address the problem within an off-policy reinforcement learning framework and show how a robot can learn to produce non-verbal backchannels, such as laughs, when trained to maximize the user's engagement and attention.
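To make the off-policy framing concrete, here is a minimal Q-learning sketch. It is not the authors' implementation: it replaces the deep Q-network with a plain Q-table so that the update is easy to follow, and the two user states (`user_talks`, `user_laughs`), the three actions and `toy_engagement_reward` are hypothetical stand-ins for real speech features and a measured engagement signal.

```python
import random

# Hypothetical action set: the abstract names laughs as one non-verbal
# backchannel; "none" and "nod" are added here for illustration only.
ACTIONS = ["none", "nod", "laugh"]
# Hypothetical, coarse user states derived from speech.
STATES = ["user_talks", "user_laughs"]


def q_learning_update(q, state, action, reward, next_state, alpha=0.1, gamma=0.9):
    """Apply one off-policy Q-learning step and return the new Q(state, action).

    The target bootstraps from max_a' Q(next_state, a'), the greedy value,
    whatever action the exploring policy takes next. A deep Q-network keeps
    this target but replaces the table with a neural network.
    """
    td_target = reward + gamma * max(q[next_state].values())
    q[state][action] += alpha * (td_target - q[state][action])
    return q[state][action]


def epsilon_greedy(q, state, epsilon, rng):
    """Behaviour policy: explore with probability epsilon, else act greedily."""
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)
    return max(q[state], key=q[state].get)


def toy_engagement_reward(state, action):
    """Hypothetical engagement signal standing in for a real measurement."""
    if state == "user_laughs" and action == "laugh":
        return 1.0
    if state == "user_talks" and action == "nod":
        return 0.5
    return 0.0


def train(steps=5000, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = {s: {a: 0.0 for a in ACTIONS} for s in STATES}
    state = rng.choice(STATES)
    for _ in range(steps):
        action = epsilon_greedy(q, state, epsilon, rng)
        reward = toy_engagement_reward(state, action)
        # Toy dynamics: the user's next state does not depend on the robot.
        next_state = rng.choice(STATES)
        q_learning_update(q, state, action, reward, next_state)
        state = next_state
    return q


if __name__ == "__main__":
    q = train()
    for s in STATES:
        print(s, "->", max(q[s], key=q[s].get))
```

The toy reward is built so that the greedy policy should learn to laugh back at a user laugh and to nod while the user talks. The learning target ignores how the data was collected (here, epsilon-greedy exploration), which is what allows off-policy methods to learn from logged interactions.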

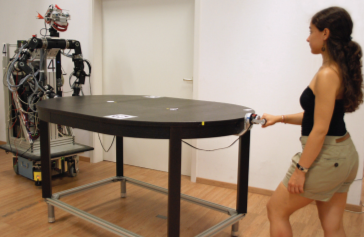

The role of roles: Physical cooperation between humans and robots

Recent advances in robotics research have softened the strict separation between human and robot workspaces, so close interaction between humans and robots is rapidly coming within reach. In this context, physical human-robot interaction raises a number of questions about what intuitive robot behavior should look like.

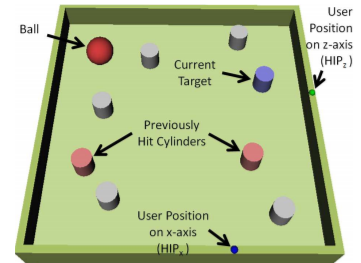

Haptic Negotiation and Role Exchange for Collaboration in Virtual Environments

We investigate how collaborative guidance can be realized in multimodal virtual environments for dynamic tasks involving motor control. Haptic guidance in our context can be defined as any form of force/tactile feedback that the computer generates to help a user execute a task in a faster, more accurate, and subjectively more pleasing fashion.
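The definition above covers any computer-generated force/tactile feedback. One common, simple form is a virtual spring-damper that pulls the user's hand toward a reference position. The sketch below assumes that form, which is not taken from this abstract, and uses made-up gains and a made-up force limit (`GuidanceGains`). It models a single axis and leaves out the user's own force, as well as the negotiation and role exchange studied in the paper.

```python
from dataclasses import dataclass


@dataclass
class GuidanceGains:
    # Hypothetical values, chosen only so the toy simulation behaves sensibly.
    stiffness: float = 200.0  # N/m
    damping: float = 10.0     # N*s/m
    max_force: float = 5.0    # N, stand-in for a haptic device's force limit


def guidance_force(x, v, x_ref, gains):
    """Virtual spring-damper pulling position x toward x_ref, clipped to the limit."""
    f = gains.stiffness * (x_ref - x) - gains.damping * v
    return max(-gains.max_force, min(gains.max_force, f))


def simulate(x0, x_ref, gains, mass=0.5, dt=0.001, steps=3000):
    """Integrate a 1-D point mass pushed only by the guidance force.

    The user's own force is left out, so this only shows that the
    generated force steers the hand toward x_ref and settles there.
    """
    x, v = x0, 0.0
    for _ in range(steps):
        f = guidance_force(x, v, x_ref, gains)
        v += (f / mass) * dt  # semi-implicit Euler: update velocity first
        x += v * dt
    return x


if __name__ == "__main__":
    gains = GuidanceGains()
    print(guidance_force(0.0, 0.0, 0.01, gains))
    print(simulate(0.0, 0.1, gains))
```

Clipping models the bounded force a haptic device can render. Raising `stiffness` gives firmer guidance but leaves the user less room to deviate from `x_ref`.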