Emotion Dependent Domain Adaptation for Speech Driven Affective Facial Feature Synthesis

August 26, 2020

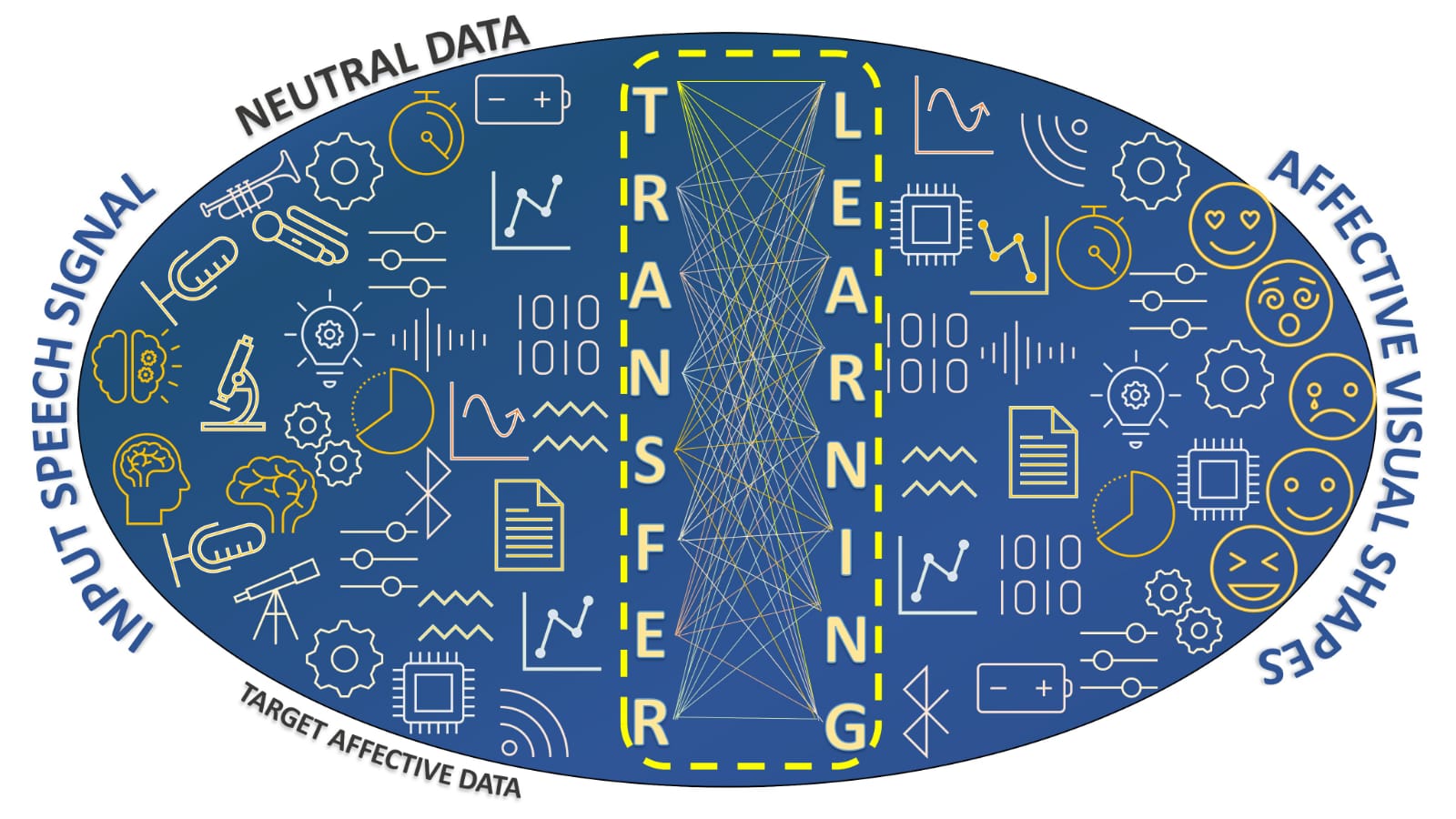

Although speech driven facial animation has been studied extensively in the literature, works focusing on the affective content of the speech are limited. This is mostly due to the scarcity of affective audio-visual data. In this paper, we improve affective facial animation by using domain adaptation to partially alleviate this data scarcity. We first define a domain adaptation that maps affective and neutral speech representations to a common latent space in which the cross-domain bias is smaller. The domain adaptation is then used to augment affective representations for each emotion category (angry, disgust, fear, happy, sad, surprise, and neutral), so that we can better train emotion-dependent deep audio-to-visual (A2V) mapping models. Based on the emotion-dependent deep A2V models, the proposed affective facial synthesis system is realized in two stages: first, speech emotion recognition extracts soft emotion category likelihoods for the utterances; then, a soft fusion of the emotion-dependent A2V mapping outputs forms the affective facial synthesis. Experimental evaluations are performed on the SAVEE audio-visual dataset. The proposed models are assessed with objective and subjective evaluations. The proposed affective A2V system achieves significant MSE loss improvements in comparison to the recent literature. Furthermore, the resulting facial animations of the proposed system are preferred over the baseline animations in the subjective evaluations.
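
As an illustration of the second stage, the soft fusion can be read as a likelihood-weighted average of the emotion-dependent A2V outputs. The following is a minimal sketch under that assumption, not the authors' implementation: the function name `soft_fusion`, the dictionary-based interfaces, and the renormalization of the likelihoods are hypothetical choices made for this example.

```python
from typing import Dict, List

# Emotion categories named in the abstract.
EMOTIONS = ["angry", "disgust", "fear", "happy", "sad", "surprise", "neutral"]


def soft_fusion(
    likelihoods: Dict[str, float],
    outputs: Dict[str, List[List[float]]],
) -> List[List[float]]:
    """Fuse per-emotion A2V outputs with utterance-level emotion likelihoods.

    likelihoods: emotion -> soft likelihood for the utterance (assumed non-negative).
    outputs: emotion -> predicted facial features, shaped [frames][feature_dims].
    Returns the weighted sum over emotions, shaped [frames][feature_dims].
    """
    total = sum(likelihoods[e] for e in EMOTIONS)
    if total <= 0:
        raise ValueError("likelihoods must sum to a positive value")
    # Renormalize so the weights sum to one (an assumption of this sketch).
    weights = {e: likelihoods[e] / total for e in EMOTIONS}

    n_frames = len(outputs[EMOTIONS[0]])
    n_dims = len(outputs[EMOTIONS[0]][0])
    fused = [[0.0] * n_dims for _ in range(n_frames)]

    for e in EMOTIONS:
        w = weights[e]
        for t, frame in enumerate(outputs[e]):
            for d, value in enumerate(frame):
                fused[t][d] += w * value
    return fused
```

With this formulation, an utterance recognized as clearly belonging to one emotion is driven almost entirely by that emotion's A2V model, while an ambiguous utterance blends the predictions of several models.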